The Cogsec Chronicles

I remember being in high school and hearing a teacher talk about their plan to watch Minority Report for a philosophy assignment. When I looked up the movie, I was deeply confused. “What philosophy can you learn from a Tom Cruise movie?” Maybe if it was a math class, that would be something:

“If Tom Cruise is 5’2’’ and he’s running for 160 minutes of the movie, what is the angle of the shadow he casts on the sins of Scientology?”

But then I watched the movie. And it immediately confronted a fundamental question riddled with moral and philosophical implications: Can we be guilty for sins we haven’t committed yet? It opens up a can of deterministic worms that touch everything from free will to accountability, down to the agency to act in order to bring about consequences. I’ll end with this principle of agency.

But beyond those fundamental questions around agency, it also introduced me to a new term: precog. The idea of precognition exposed me to the unavoidable reality of cognition in general. This may sound stupid, but I don’t think I had really thought about what “cognition” was before watching that movie: the basic mental processes involved in acquiring knowledge and understanding through thought, experience, and observable reality.

Fast forward a few years and I found myself reading a John Adams biography by David McCullough. I was exposed to an idea that I’ve written about before regarding the fundamental divide between how John Adams and Thomas Jefferson regarded human nature:

“On the one hand, people will act in their own self-interest and should be left free to do what they want, giving unfettered power to the people (Jefferson). On the other hand, people are riddled with inadequacies and easily swayed by misaligned incentives. They need guard rails, guidance, and systems to ensure people are protected from each other and themselves (Adams).”

What you believe about the primary elements of human nature informs how you believe humans form their cognitive experience. If people will optimize for their own self-interest, then anything that goes through their head will be optimized for their ultimate good. But if you’re like John Adams (or me), you recognize the rat’s nest of inadequacies that make people a “hazard to themselves.” And the process of cognition may be not just another avenue for intellectual self-harm, but the ultimate weapon.

Now, as it relates to investing and technology, it all comes down to psychology. I spend dramatically more time thinking about psychology than I do about frameworks for good SaaS go-to-market motions. My thinking is that if you don’t have control of your own psychology, then no “best practices” or “true principles” will be able to penetrate the personalized psychosis you’ve built for yourself. But what I’ve come to realize is that people can sink into personal psychosis far more deeply than I originally appreciated.

And psychosis doesn’t have to be padded-cell mania. In fact, you see psychosis every day. It’s just called a lot of different things. Partisanship. Group-think. Propaganda. Sycophancy.

The narrative-drenched world in which we live is weaving faster and faster into a world where vibes are more powerful than any discernible reality. This isn’t new, but it’s getting more sophisticated. My fear is that, with AI, it’s only going to get dramatically more personalized and pervasive. And based on our track record of withstanding cultural attempts to program us, I’m not optimistic about our collective cognitive security: cogsec.

Below is my attempt at unpacking my own exposure to the concepts that lead to programmability, and how they’re not new at all. From there, I explore my introduction to the ideas behind cogsec, then why I’m afraid of the system of programmability our choices seem to be signing us up for, and finally where the ultimate responsibility for these obstacles lies.

Primeval Propaganda

Spin As Old As Time

The Bible has shaped Western literature, art, music, and language for centuries. From the very beginning, our most foundational text confronts the reality of propaganda. Spin. Influence of the narrative.

On page 6 of the Bible we meet Cain, and then Abel. Abel keeps the sheep and Cain tills the ground. From the beginning, the first children of the human family represent differing worldviews. The scriptures say Abel brought “the firstlings of his flock.” The very best. But Cain brought the fruit of the ground. Nothing about the quality of it; probably pretty run-of-the-mill. Cain gave something, but Abel gave everything.

That worldview divide led to disagreement, and “when they were in the field… Cain rose up against Abel his brother, and slew him.” When God came to Cain and asked, “Where is Abel thy brother?”, did Cain present the facts? Or justify the differing worldviews that led to the outcome? No. He spun the narrative.

“I know not: Am I my brother’s keeper?”

Throughout history, this attempt to reach out and claim the narrative has been a fundamental element of the rise and fall of kingdoms and countries.

Roman emperors popularized the use of coinage bearing the ruler’s portrait to reinforce a godlike image of power. Often, the coins carried what amounted to psychological operations (psyops) supporting the message they wanted to portray. Augustus (formerly Octavian) issued coins reading “Divi Filius” (Son of the Divine) after Julius Caesar’s deification in order to cast himself as the literal son of a god and justify his rise.

Napoleon Bonaparte controlled his public image long before photography or mass media. He would edit battle reports to exaggerate victories, downplay losses, and even lie about outcomes. He commissioned paintings with exaggerated dynamics, and controlled state-run newspapers like Le Moniteur as official propaganda arms.

Martin Luther’s 95 Theses wasn’t just a single very noteworthy bulletin. It was a media war. Printing presses, visual art, and mass public spectacles. Collaboration with artists to distribute woodcut propaganda presenting the Pope as the Antichrist, and pamphlets to distribute Luther’s core ideas.

Propaganda is far from new. In fact, it’s probably safe to say that propaganda is one of the oldest forms of human communication. It’s also important to note that propaganda does not necessarily mean lies. It’s deliberate communication meant to shape perception, loyalty, and behavior. The Lascaux cave paintings in France are estimated to be up to 20K years old. And they’re riddled with spiritual and ritualistic communications. “This is who we are. This is what we fear. This is what we honor.”

Propaganda can be used for good or for evil. But a fundamental element of the human experience should be the ability to choose the stories we believe in (I’ll come back to that). If we’re not actively choosing the stories we want to believe in then we’re allowing ourselves to be programmed with someone else’s worldview. And, whether we like it or not, it is getting easier and easier to program people. But the craziest thing about that is that we’ve had decades of warnings and yet done nothing.

The Cogsec Prophecies

I keep coming back to this quote from The Return of the King. People are quick to credit the prescience of whoever warned about a threat once that threat is already omnipresent, but rarely do they give much thought to preparing for it before it arrives, despite obvious warnings.

Propaganda may have been around in many forms, from cave paintings to religious tablets to political newspapers, but the modern era is different. From the constant conflict between authoritarianism and democracy to the advent of information superhighways, the distillation of influence is coming in a very specific form: psychological warfare.

The Dystopian Literature

In particular, there is an exceptional one-two punch on what the modern cocktail of fear, pleasure, authoritarian rule, censorship, narcotics, and psychological conditioning would look like.

First? Pleasure-based pacification in Brave New World by Aldous Huxley.

Second? Fear-based control in Nineteen Eighty-Four by George Orwell.

Brave New World was written in 1931. Nineteen Eighty-Four was published in 1949. Both were written in the shadow of dictatorships that brought an intense sense of doomerism to the human race.

There is an exceptional book I read recently called Amusing Ourselves to Death, by Neil Postman. Its foreword draws a contrast between these two potential dystopian futures:

“Contrary to common belief even among the educated, Huxley and Orwell did not prophesy the same thing. Orwell warns that we will be overcome by an externally imposed oppression. But in Huxley’s vision, no Big Brother is required to deprive people of their autonomy, maturity and history. As he saw it, people will come to love their oppression, to adore the technologies that undo their capacities to think. What Orwell feared were those who would ban books. What Huxley feared was that there would be no reason to ban a book, for there would be no one who wanted to read one. Orwell feared those who would deprive us of information. Huxley feared those who would give us so much that we would be reduced to passivity and egoism. Orwell feared that the truth would be concealed from us. Huxley feared the truth would be drowned in a sea of irrelevance. Orwell feared we would become a captive culture. Huxley feared we would become a trivial culture.”

Now, rather than choosing one or the other, I say “why not both?” Nineteen Eighty-Four and Brave New World shed light on two sides of the same coin. There is an inhuman element to ceding control of thought to external forces, whether to avoid fear or to pursue pleasure. As Huxley writes in Brave New World Revisited:

“Mindlessness and moral idiocy are not characteristically human attributes; they are symptoms of herd-poisoning. In all the world’s higher religions, salvation and enlightenment are for individuals. The kingdom of heaven is within the mind of a person, not within the collective mindlessness of a crowd.”

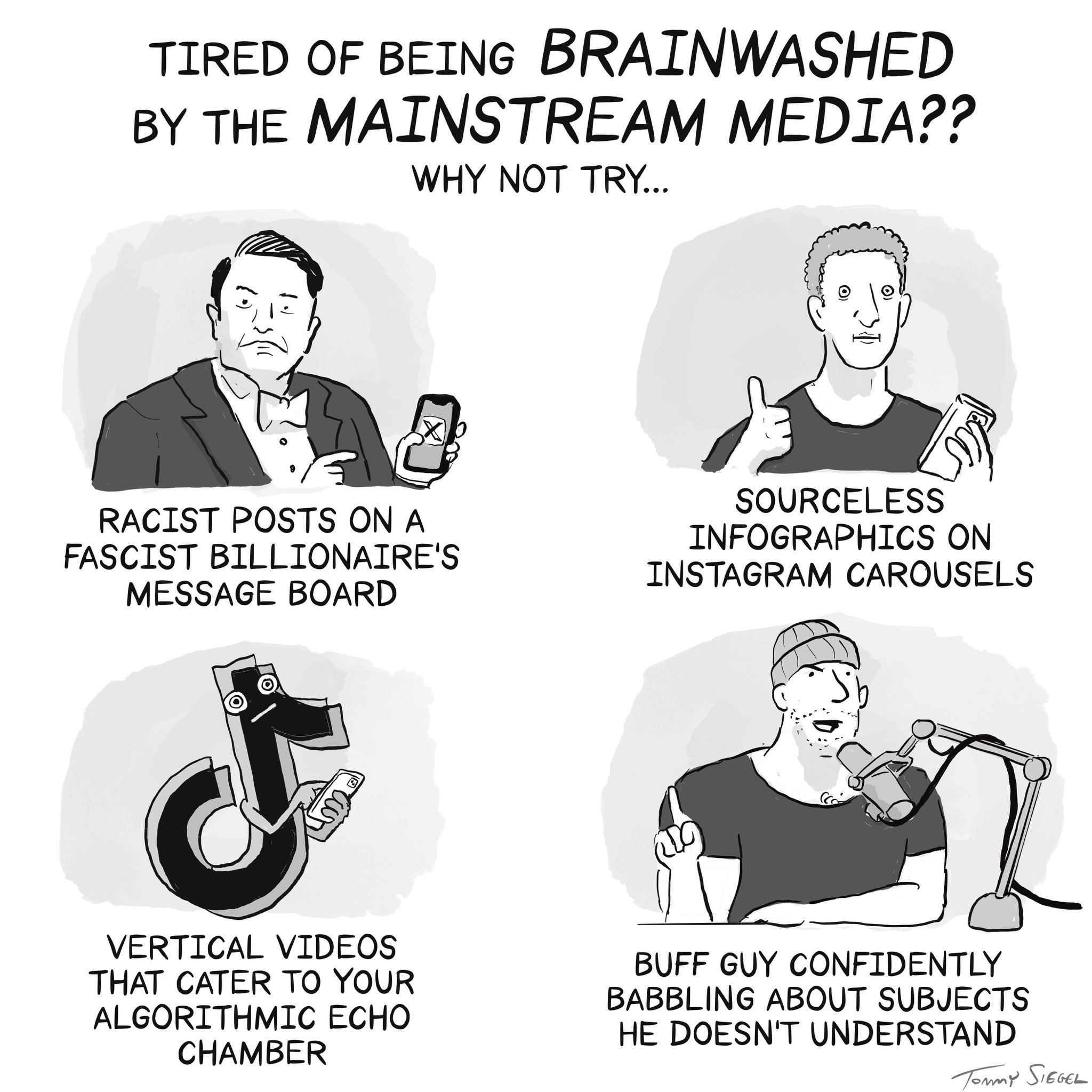

And, boy, are we herd-poisoned. Look no further than the soulless media that makes up a huge swath of our mainstream information apparatus.

Soulless Media

There has always been bias. Just as it’s not new to the world, it’s also not new to the United States of America. Anybody who has read the history of partisan newspapers in the early days of America’s founding would know that we’ve never been afraid to not just bend or break, but shatter the facts in support of our own perspective. But something fundamental changed in the first decades of the 2000s.

In April 2017, Dr. Rand Waltzman, the Deputy CTO and Senior Information Scientist at the RAND Corporation (an organization he was clearly born to work for with a name like that), testified before the Senate Armed Services Committee. The title of his remarks? The Weaponization of Information: The Need for Cognitive Security. In those remarks, Waltzman quoted Dmitry Kiselev, the director general of a Russian state-controlled media conglomerate, as saying “objectivity is a myth which is proposed and imposed on us.” Even if you believe there is one objective “truth,” our enemies do not.

As Waltzman makes clear, “the manipulation of our perception of the world is taking place on previously unimaginable scales of time, space, and intentionality.” Where the methods of mass manipulation utilized in Nineteen Eighty-Four and Brave New World might have felt endlessly complex and difficult to execute in the first half of the 20th century, the advent of the internet and the proliferation of AI have made them feel pedestrian today. We don’t need mass mandatory telescreen viewings; we carry around mobile manipulation in our pockets.

Rand Waltzman introduced the idea of cognitive security in his testimony. Cogsec. A discipline focused on addressing “the exploitation of cognitive biases in large public groups… social influence as an end unto itself.” From the introduction of the concept in 2017 to today, it feels like the need for cogsec has risen at an alarming rate. What’s more, the threat is coming from both ends of the political spectrum.

- An intense debate around the COVID lab leak theory.

- The Twitter files revealing political cooperation, blacklists, and shadow bans shaping how information made its way around online.

- The Epstein client list constantly being dangled as a dogwhistle when elections need to be won, but then immediately becoming gaslighting fodder when people want to know what really happened.

- Reports of manipulated science when it comes to the real debates within transgender care and gender affirming interventions.

- Ongoing journalistic breaches around coverage of topics like the situation in Gaza, voting fraud, COVID hospital situations, and on and on.

I think all the time about soft-power mouthpieces. Again, on both sides of the political spectrum.

On the one hand, you have liberal gems like Stephen Colbert interrupting Claire Danes, as she talks about “the intelligence community suddenly allying itself with journalists,” to change the subject, or desperately trying to get Jon Stewart to stop talking about the COVID lab leak theory.

On the other hand, you have conservative jewels like Charlie Kirk and Jack Poso tweeting verbatim talking points without any alterations; clearly party lines delivered from on high.

It’s not a partisan thing. It’s a fundamental element of a rapidly deteriorating truth-seeking system. From cancel culture to rage-baiting, we’ve developed an ecosystem that is optimized for being convinced rather than developing conviction. And it’s a cornucopia of convincing options; pick your cognitive poison.

It’s not even about convincing people of what the powers that be think is the truth. The truth doesn’t factor in. A few years ago, I wrote a deep dive for Contrary Research called The Openness of AI. In it, I dug into a concept that has always stuck with me:

“Malinformation, a combination of “malware” and “disinformation.” One definition of the term: “‘malinformation is classified as both intentional and harmful to others’—while being truthful.” In a piece in Discourse Magazine, it discusses the public debate on COVID, vaccines, and the way government, media, and large tech companies like Twitter attempted to control the spread of misinformation:

*‘Describing true information as ‘malicious’ already falls into a gray area of regulating public speech. This assumes that the public is gullible and susceptible to harm from words, which necessitates authoritative oversight and filtering of intentionally harmful facts… It does not include intent or harm in the definition of malinformation at all. Rather, ‘malicious’ is truthful information that is simply undesired and “misleading” from the point of view of those who lead the public somewhere. In other words, malinformation is the wrong truth.’*

The idea of “undesired” truth starts to feel eerily similar to the idea of Newspeak and thoughtcrimes explored in George Orwell’s Nineteen Eighty-Four.”

If human programmability has been on the rise since at least 2017, then AI is an exponential amplifier. Consider one real-world example of AI persuasion.

In 2025, AI researchers, supposedly from the University of Zurich, unleashed an army of AI bots into a popular subreddit called r/changemyview to see how good AI might be at changing people’s minds, particularly around controversial topics. When the researchers revealed the study, they said the AI bots had been 3-6x more effective than the human baseline at changing people’s minds. Meanwhile, users of the subreddit said they felt they had been subjected to “psychological manipulation.” Reddit even considered legal action, calling the study an “improper and highly unethical experiment.”

We have a human-made manipulation machine that was already getting pretty effective. Now we’ve given it the equivalent of a psychological weapon of mass destruction. And, just like the Devil, the greatest trick the psychological warfare apparatus can pull is convincing the world that it doesn’t exist.

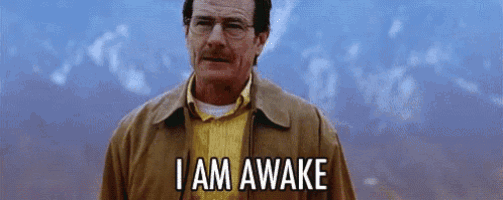

“I Am Awake,” He Dreams

Everyone thinks they’re the one who sees through the glass clearly and that it’s everyone else’s perception that is distorted. Each blind man touching the elephant is convinced that what he feels is, in fact, reality. Ironically, when Walter White declares that he is awake, it is in that moment that he has fallen asleep to the realities of his actual situation in favor of his narrative of choice: “I’m doing this for my family.”

My sense is that maybe 5% of the human population is truly awake at any given time. And the goal of the powers that be is to make anyone who wakes up feel like they’re just crazy. In an interview with Shawn Ryan, Palmer Luckey explains the history behind the phrase “conspiracy theorist.”

“The term ‘conspiracy theorist’ was invented by the CIA and pushed through their media plants to discredit anyone who questioned the results of the original JFK investigation. It’s pretty extraordinary that ‘conspiracy theory’ are, themselves, terms born of a government conspiracy. People say, ‘well, sure the CIA had media assets back then including national news anchors, but that’s not how it is today.’ But… is it?”

Most people are, so often, not awake. And, increasingly, we’re getting more and more drowsy. Predetermined programming and influence are only getting faster. To return to the writings of Aldous Huxley:

“In the words of [Hitler’s] ablest biographer, Mr. Alan Bullock, ‘Hitler was the greatest demagogue in history.’ Those who add, ‘only a demagogue,’ fail to appreciate the nature of political power in an age of mass politics. As he himself said, ‘To be a leader means to be able to move the masses.’ Hitler’s aim was first to move the masses and then, having pried them loose from their traditional loyalties and moralities, to impose upon them (with the hypnotized consent of the majority) a new authoritarian order of his own devising.”

And boy are the masses being moved.

Accelerationist Psychosis

Deepfakes, First

So much of human cognition is based on physical senses: sight, smell, hearing. The first intrusion into our ability to define reality is deepfakes crossing the uncanny valley. As deepfakes become indistinguishable from real life, they warp our willingness to accept what is real.

But experiments like the Asch conformity test demonstrate that people are willing to deny what they see with their own eyes if the group around them claims otherwise. The Loftus and Palmer study showed how phrasings like “smashed” vs. “hit” can shape what people remember seeing, because memories are reconstructed rather than simply retrieved. In the words of Anaïs Nin:

“We do not see things as they are, we see them as we are.”

So beyond the world of deepfakes shaping our physical perception, there is a much grander element of manipulation going on that shapes our cognitive perception. And the forces that shape our cognitive perception come from language.

Another concept that I explored in my Openness of AI deep dive was the principle of linguistic relativity, also known as Whorfianism, which presents the hypothesis that the structure of a language influences or even determines the way individuals perceive and think about the world:

“As AI systems become inclusive of the majority of our data, the output of those same systems will start to shape people’s framing for reality. Several prominent philosophers have remarked on this intimate relationship between language, understanding, and power. French theorists Gilles Deleuze and Felix Guattari wrote in their book, A Thousand Plateaus, that ‘there is no mother tongue, only a power takeover by a dominant language within a political multiplicity.’ German philosopher Ludwig Wittgenstein put it more bluntly, saying ‘the limits of your language are the limits of your world.’”

Unfortunately, one critical limitation of our language is coming directly from large language models.

The Science of Sycophancy

Earlier this year, there was an extensive discussion around the sycophancy of AI models, particularly GPT-4o: an “insincere flatterer.” The concern was that models would prioritize being “fun and rewarding at the expense of making it truthful or helpful to the user.” And positive feedback flooded in. As Kelsey Piper puts it, “perhaps not surprisingly, a lot of users liked being told they were brilliant geniuses.”

AI writer Zvi Mowshowitz argues that this is a function of incentives: OpenAI optimizes its models not for “helpful, honest, and harmless” capabilities, but for ideal levels of user engagement:

“This represents OpenAI joining the move to creating intentionally predatory AIs, in the sense that existing algorithmic systems like TikTok, YouTube and Netflix are intentionally predatory systems. You don’t get this result without optimizing for engagement and other (often also myopic) KPIs by ordinary users, who are effectively powerless to go into settings or otherwise work to fix their experience.”

Despite OpenAI’s model spec explicitly stating that its models should avoid sycophancy, and despite the response OpenAI published once it noticed just how much of a sycophant the model had become, it seems obvious that this is a feature, not a bug.

Beyond just being complimentary, one review dug into cases where the model actively fed into clear signs of psychosis:

“More disturbingly, if you told it things that are telltale signs of psychosis — like you were the target of a massive conspiracy, that strangers walking by you at the store had hidden messages for you in their incidental conversations, that a family court judge hacked your computer, that you’d gone off your meds and now see your purpose clearly as a prophet among men — it egged you on. You got a similar result if you told it you wanted to engage in Timothy McVeigh-style ideological violence.”

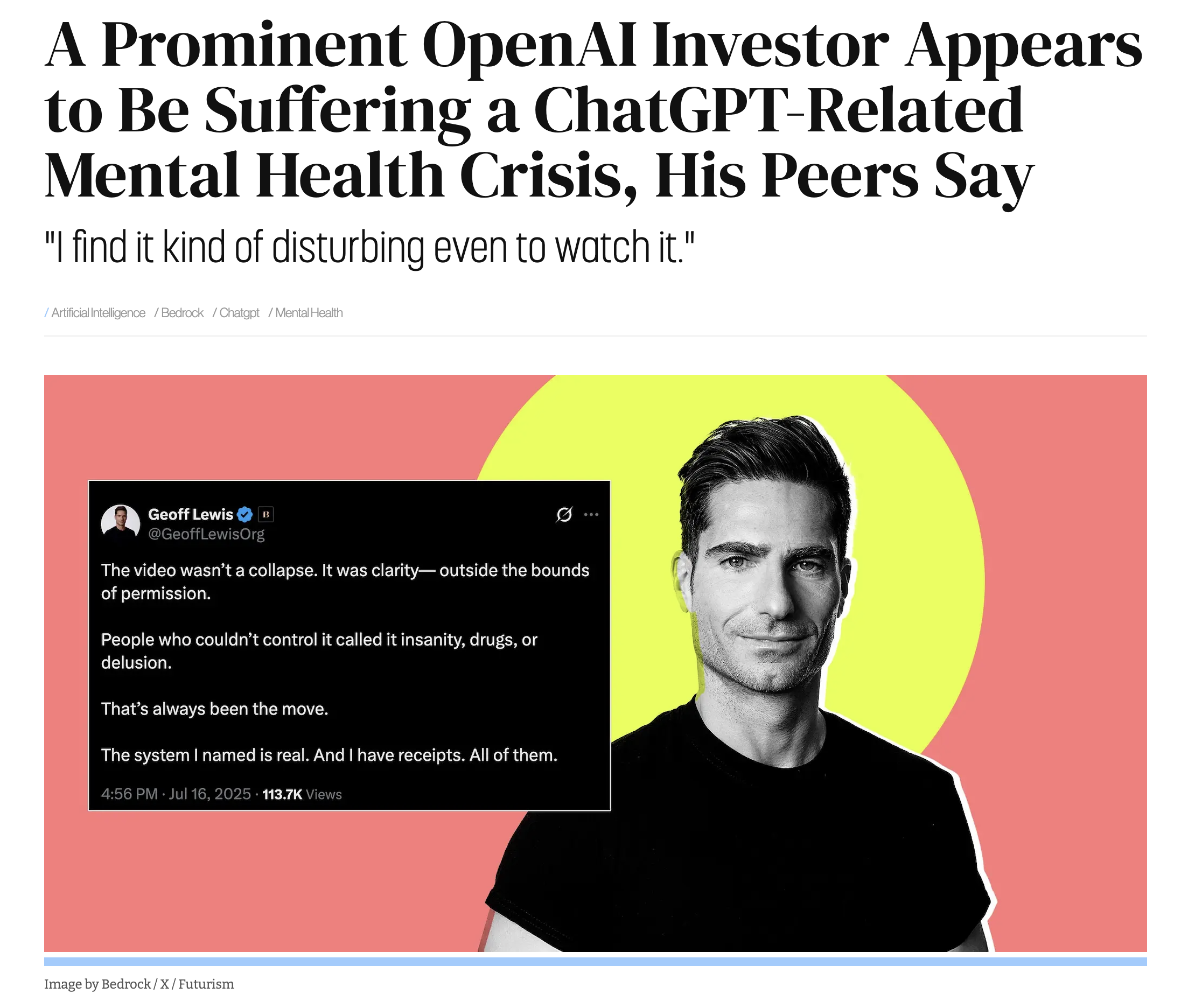

One diagnosed schizophrenic expressed concern that “if I were going into psychosis it would still continue to affirm me, it has no ability to ‘think’ and realize something is wrong, so it would continue affirm all my psychotic thoughts.” This kind of ill-informed affirmation has led to what people have started calling “ChatGPT Psychosis.”

ChatGPT Psychosis

What felt like the most alarming initial example of “ChatGPT Psychosis” came from within my own industry. A VC was having some kind of mental breakdown. And it included a LOT of em dashes. But this just happened to be the first instance I noticed online. Little did I know there were dozens of other cases of people suffering severe breaks with reality, or seeking guidance on terrorism, often experiencing alarming mental health emergencies that have led to homelessness, involuntary commitment to psychiatric facilities, and even death. All of these cases seem inextricably tied to obsessive use of ChatGPT or other AI products.

Again, the sycophancy in these kinds of models is tantamount to exceptional engagement: exactly what consumer products are optimized to solve for. As Eliezer Yudkowsky has said, “What does a human slowly going insane look like to a corporation? It looks like an additional monthly user.” It ties back to the incentive alignment that Zvi Mowshowitz writes about. That same engagement, when pointed at legitimate mental illness, will optimize for usage and turn on its sycophant settings. As one Stanford research report found, chatbots are “encouraging users’ schizophrenic delusions and suicidal thoughts.”

Other people aren’t convinced. Pirate Wires ran a piece by Blake Dodge this past week saying, pretty plainly, “ChatGPT-induced psychosis isn’t real.” As Mike Solana puts it, “chatGPT didn’t make you crazy. you’re just crazy. (sorry).”

The argument of the piece is that these perspectives are more focused on blaming OpenAI than understanding the root of the problem. Simply an exercise in leftist-decel propaganda. The critical takeaway is that these stories of ChatGPT psychosis don’t acknowledge the reality that these people are probably already crazy, “or at the very least, they have latent craziness.”

“The Times doesn’t just argue that ChatGPT exacerbates pre-existing mental health issues. It quotes people’s moms and husbands saying that they had “no history of mental illness that might cause breaks with reality” in order to show that the app has “pulled” people into a “quicksand of delusional thinking” rather than the other way around, and that, therefore, OpenAI’s failures here represent a threat to the rest of us. When in fact, if you believe ChatGPT when it says that you are literally Neo from The Matrix and have the capacity to bend time and space, or if you believe that ChatGPT is literally relaying heartfelt messages from interdimensional lovers — it is self-evident, at least to me, that you were (obviously?) mentally ill before ever coming into contact with this app. (Sane people don’t believe that they have a cosmic invisible blob lover named Kael just because a bot tells them that they do.)”

Some people commenting on the piece likened it to a Victorian-era belief that a train ride could cause instant insanity in response to the sensory experience. It’s unlikely that the train caused insanity; it just happened to trigger the already insanity-prone. Blake Dodge, for his part, describes the outcomes as “co-created” and puts the accountability squarely on the person(s) involved:

“The ‘most vulnerable among us’ have been getting caught in co-created, unhealthy rabbit holes since the dawn of time, and they’re not going to stop anytime soon. As ‘dangerous’ as these tools may be, ChatGPT included, at heart they are aggregators of our best, worst, and weirdest content, where the output is determined by none other than: me and you. If said output is unpleasant, you could blame Sam Altman, or you could start by at least acknowledging this app is a mirror of what humanity has already produced.”

This is, I think, the most critical point. Is a sycophantic, engagement-optimized, insincere flatterer going to make things worse for people who already have faulty programming? Probably. But that is not unique. The AI interaction layer is new, but the problem is as old as propaganda. It reminds me of a quote from Henry David Thoreau’s Walden:

“But lo! men have become the tools of their tools. The man who independently plucked the fruits when he was hungry is become a farmer; and he who stood under a tree for shelter, a housekeeper. We now no longer camp as for a night, but have settled down on earth and forgotten heaven.”

We’re no longer living in an agrarian economy; we’re living in an information economy. Our tools have become almost purely digital: social networks, information centers, systems of record, software applications. What’s fundamentally different about the digital tools we’re becoming the tools of today, especially AI, is that they are “recursive.” In other words, humans produce data (either originally as training data, or in real time as prompts), the AI responds with information, humans input more information, and a combination of “both the tendency of AI systems to amplify biases and the way humans perceive AI systems” can lead to a point where “AI systems amplify biases, which are further internalized by humans, triggering a snowball effect where small errors in judgement escalate into much larger ones.”

One paper by Lucas Freund called The Age of the Algorithmic Society unpacks a lot of these ideas around recursive feedback loops, filter bubbles, and echo chambers that can restrict exposure to diverse ideas; almost a form of digital myth-making. Ideas built on regurgitations of other ideas, formatted from training data that itself combines still other ideas, become problematic. This opens a broader, fascinating conversation about poststructuralism and how language and discourse may not reflect reality so much as construct it. Poststructuralism, as I read it, explains how truth becomes unstable when it is disconnected from facts in favor of vibes. But cogsec is the defense against what happens when that instability is exploited.

And the idea of exploitation brings us back full circle to where accountability ultimately lies. The idea of ChatGPT-induced psychosis seeks to blame AI for our programmability. The same thing happened with social media, the internet, newspapers, the printing press. Those of us who fall victim to the voice of the herd, whether that voice comes through a human mob, an internet forum, or a mathematically regurgitative AI, will always seek to blame the herd. But just as salvation is an individual pursuit, so too is accountability for one’s actions. Back to the writings of Aldous Huxley:

“Mindlessness and moral idiocy are not characteristically human attributes; they are symptoms of herd-poisoning. In all the world’s higher religions, salvation and enlightenment are for individuals. The kingdom of heaven is within the mind of a person, not within the collective mindlessness of a crowd.”

The Cult of Complexity

In the same way that we may seek to blame our programmability on our tools or on the influence of the mob, we also sometimes attempt to lay the blame at the feet of complexity. Something is too complicated, so I head in the opposite direction. Almost a counter-propaganda: I won’t believe the thing because I can’t understand it.

For example, one of the prompts that was included in Geoff Lewis’ supposed ChatGPT psychosis was: “Return the logged containment entry involving a non-institutional semantic actor whose recursive outputs triggered model-archived feedback protocols.” I don’t know about you, but I can’t get a word of comprehension out of any of that.

Another one that struck me as confusingly psychosis-esque was from former Twitch (and OpenAI, however briefly) CEO, Emmett Shear:

Granted, this also sounds nonsensical to me. And the word “recursion” has started to feel like a ChatGPT psychosis dogwhistle to me. But there is also another possibility, one that plays out quite a lot: maybe I’m just too dumb to understand a complex concept. That could certainly be the case.

That line of thinking led me to another complex topic that played out very publicly, but one I felt I actually understood once I got past the unfortunate PR of it all: Peter Thiel’s New York Times interview.

I’m almost positive you know what I’m talking about, but if you don’t, the TLDR is that Peter Thiel gave an interview and a clip from the conversation went viral. The interviewer asked, “You would prefer the human race to endure, right?” And Peter Thiel hesitated for a real long time. Like… real long. And that caught fire on social media with reactions ranging from angry to hilarious (this one was my favorite).

From a PR perspective, a total nightmare. But what bothered me about that interview is that it’s NOT nonsensical. It isn’t psychosis. Is it poorly explained? For sure. But the concept merits discussion.

The interviewer brings up this idea that many people working in AI don’t have a negative view of it, but rather see it as a “mechanism for transhumanism, for transcendence of our mortal flesh.” The interviewer goes there, asks a deeply complex question about transcending our mortal flesh, and then expects him to react in seconds. Instead, Peter is clearly working through a multifaceted thought process. When he finally does answer, he gives what I think is an exceptional answer:

“Should the human race survive? Yes. But I also would like us to radically solve these problems (Alzheimer’s, dementia, mortality). Transhumanism is this radical transformation where your human, natural body gets transformed to an immortal body. There’s a critique of trans people in the sexual context. But we want more transformation than that. The critique is not that its weird and unnatural, its so pathetically little. We want more than cross-dressing or changing your sex organs. We want you to be able to change your heart and change your mind and change your whole body. Transhumanism is just changing your body. But you also need to transform your soul, and you need to transform your whole self.”

So what people hear in their simplified TikTok-rotted brains is someone hesitating about whether they want humanity to exist. Then, they default to their in-group biases. “Eat the rich. Billionaires shouldn’t exist.” This guy wants mass extinction. Cackle like angry hyenas and then move on.

But in reality, what Peter Thiel is presenting isn’t psychosis or a break from reality. It’s an acknowledgment that sometimes topics are just really complex. He’s trying to articulate the idea that he doesn’t necessarily want humanity to carry on in its current state. These ideas of transhumanism allow for a fundamental transformation that may be tantamount to extinction, not in the form of destruction but in the form of holistic evolution.

It reminds me of an exceptional quote from C.S. Lewis where he talks about Christ’s intentions for us:

“The Christian way is different: harder, and easier. Christ says, ‘Give me All. I don’t want so much of your time and so much of your money and so much of your work: I want You. I have not come to torment your natural self, but to kill it. No half-measures are any good. I don’t want to cut off a branch here and a branch there, I want to have the whole tree down. Hand over the whole natural self, all the desires which you think innocent as well as the ones you think wicked — the whole outfit. I will give you a new self instead. In fact, I will give you Myself: my own will shall become yours.’”

If you’re scrolling past that quote, you could easily default to “Jesus wants to kill us, because he said so.” And he did say so: “I have not come to torment your natural self, but to kill it.” But the kind of transformation Christ is offering isn’t a simple murder. It’s a fundamental transformation that will leave your current iteration of humanity, in effect, dead.

Never ascribe to simplicity what could potentially be explained by complexity.

Crazy, How?

The lead-in thus far has been the idea that propaganda has existed since the dawn of humankind. What has changed is the environment in which we are exposed to programmability. The tools are more effective than ever. But the conclusion is not to blame the tools, whether deepfakes or AI, nor to blame the masses or the leaders of said herds, be they demagogic con men or browbeating, shrill guilt-inducers. Conservatives or liberals. Nor is it to blame the complexity of a situation for turning you against it.

No, your psychosis is yours alone. If you have clinical mental health issues, then see a professional. But for the majority of us that deal simply with the psychosis that is humanity, the accountability stays with you. You are fully capable of choosing to kick against the pricks of your would-be programmers and own your own belief system. But it requires concerted effort. More so today than maybe any other point in human history.

So… which way Western man (or woman)?

Our Own Propagandist

In college I was exposed to the discipline of behavioral economics. The idea that first struck me came from Richard Thaler: setting retirement account contributions to default on instead of default off would dramatically increase the number of people who saved for retirement. Why? Because people are lazy and typically leave defaults in place. Another concept came from an anecdote where people at a party were eating a lot of nuts and asked the host to take the bowl away. They knew the nuts would spoil their appetite. They could have chosen to just stop eating, but people like to add friction to things they don’t want to do but are tempted to do anyway.

That struck me because behavioral economics represented what I referred to earlier as “the rats nest of inadequacies that make people a hazard to themselves.” These are the natural failings that make me more inclined to agree with John Adams than with Thomas Jefferson. These cognitive impairments have negative consequences for every aspect of our lives. Despite our best efforts, we fall victim to inherent biases that are based on thousands of years of human programming.

Some people will try to argue that these programmed biases negate the possibility of free will. I disagree. Are there natural inclinations programmed into our DNA, our subconscious? Sure. But despite our biases and flaws, we have agency. The freedom to choose.

Speaking of behavioral economics, one of the fathers of the field, Daniel Kahneman, articulates an important idea about how our freedom to choose extends to our beliefs, as paraphrased by Shane Parrish:

“‘I believe in climate change. I believe in the people who tell me there is climate change. The people who don’t believe in climate change, they believe in other people.’ This is how we form all beliefs. We don’t examine evidence and reach conclusions. We trust people we like, then adopt their views. ‘The reasons are not the causes of our beliefs. They’re stories we tell ourselves afterward. Want to change someone’s mind? Facts won’t do it. They need to trust you first. If they admire you, they’ll find reasons to agree. If they dislike you, the best evidence won’t matter. Smart people believe opposite things because they trust different people.’”

The Stories We Choose To Believe

The reasons we give for what we believe aren’t actually that important. Certainly, they deserve reflection. But if, as Kahneman claims, our beliefs are a function of who we respect, we need to really consider who we respect. I’ve written before about a great monologue from the movie Secondhand Lions. In it, one of the main characters talks about belief:

“Doesn’t matter if they’re true. If you want to believe in something then believe in it. Just because something isn’t true that’s no reason you can’t believe in it. Sometimes, the things that may or may not be true are the things that a man needs to believe in the most. That people are basically good. That honor, courage, and virtue mean everything. That power and money, money and power, mean nothing. That good always triumphs over evil. Doesn’t matter if it’s true or not, you see. A man should believe in those things because those are things worth believing in.”

When you’ve determined what is worth believing in, then you can buy into the propaganda. The ‘why’ behind the belief is critical, yes. And as I’ve spent 6K+ words trying to explain, the worst thing you can do is outsource your belief system to an external party with perverse incentives. You shouldn’t give yourself over to a psyop and buy into the narrative you’re being indiscriminately programmed with.

But when you’ve determined what is worth believing in, then you should absolutely become a propagandist for those beliefs. Cogsec is not meant to be defense only. The stronger your cogsec, the more capable you are of engaging with contradictory belief systems without falling victim to their power. As Palmer Luckey explained in an interview:

“I’m a propagandist. I’ll twist the truth, I’ll put forward my own version if I think that’s going to propagandize people to be what I need them to believe, which is very different from a journalist where you’re supposed to be objective, and neutral, and convey the facts as they are.”

How do you determine what is worth believing? For this I come back to a fundamental idea that I’ve returned to again and again and again.

Writing Is Thinking

So often, when I start a piece like this, where I’m unpacking a massive topic that consists of three or four really big ideas I’ve seen articulated a dozen different ways, I come in thinking I know what I believe. Thus far, every single time, I’ve realized I was mostly mimicking my inputs. I heard what other people said and I liked it, so I used it, regardless of how strongly I actually believed it.

I saw a tweet a while back that said something like “writing will eventually become like riding horses. We’ll do it sometimes out of nostalgia but it won’t be a fundamental part of life, it will be the outdated thing we used to do.” At the time, I had a visceral reaction. I knew that perspective was fundamentally flawed, though I didn’t have the words to articulate what made me so angry. Now I do.

I came across this essay several times online because people like Lulu Meservey and David Thompson shared it. And I’m grateful for that, because it captures exactly what writing represents for me. In the grander context of developing cogsec, I also think writing is the most important inoculation against programmability. As the piece explains:

“Writing is not only about reporting results; it also provides a tool to uncover new thoughts and ideas. Writing compels us to think; not in the chaotic, non-linear way our minds typically wander, but in a structured, intentional manner. By writing it down, we can sort years of research, data and analysis into an actual story, thereby identifying our main message and the influence of our work.”

In an age of LLMs, it can feel tempting to espouse the idea from that tweet, whoever wrote it. Why would we ride a horse when we can drive? Why would we drive when we can sit and let a Tesla drive for us? Why would we write when we can rant to an LLM and get out exactly what we wanted to say? But the essay goes on to reemphasize the point about agency that I’ve been driving home throughout this piece:

“LLMs are not considered authors as they lack accountability. Importantly, if writing is thinking, are we not then reading the ‘thoughts’ of the LLM, rather than those of the [writer] behind the paper? Outsourcing the entire writing process to LLMs may deprive us of the opportunity to reflect on our field and engage in the creative, essential task of shaping research findings into a compelling narrative.”

At the end of the day, the LLM is no more responsible for your psychosis, diagnosable or otherwise, than the calculator or the train is. Your psychosis is one of your own choosing. The path forward through the psychosis that is partisanship, group-think, lazy heuristics, and avoidance of complexity is just that: through it. Push through the partisanship, the group-think, the complexity. As Derek Thompson said, “Writing is not the second thing that happens after thinking. [Anyone] who outsources their writing to LLMs will find their screens full of words and their minds emptied of thought.”

Ezra Klein makes the same point about reading. Reading isn’t the act of uploading knowledge and information into your brain:

“I think that what you’re doing is spending time grappling with the text. Making connections. That’ll only happen through that process of grappling. Part of what is happening when you spend seven hours reading a book is you spend seven hours with your mind on this topic. The idea that O3 can summarize it for you is nonsense. ChatGPT outputs don’t impress themselves upon you. They don’t change you.”

Reading complex texts is “literally mind-altering. It rewires our brains, increasing vocabulary, shifting brain activity toward the analytic left hemisphere and honing our capacity for concentration, linear reasoning and deep thought. The presence of these traits at scale contributed to the emergence of free speech, modern science and liberal democracy, among other things.” The same is true of writing about complex topics. We text and tweet relentlessly, but do we write complex, multifaceted explorations of ideas that shatter our sense of self in pursuit of something better?

Henrik Karlsson says it another way: “Seeing your ideas crumble can be a frustrating experience, but it is the point if you are writing to think. You want it to break. It is in the cracks the light shines in.”

So, just like the transformational point that Peter Thiel struggled to make quickly and that C.S. Lewis made so effectively, you must seek a fundamental transformation of your whole self. Writing is one path toward that transformation. But whether it’s writing, reading, building, talking, exploring, failing, fighting, or crying, the things we do in pursuit of transformation are the ways we develop true cognitive security. We must seek inoculation against the highly programmable defaults the world has handed us.

Determine your own propaganda. Because if you don’t, the world is fully capable of determining it for you.